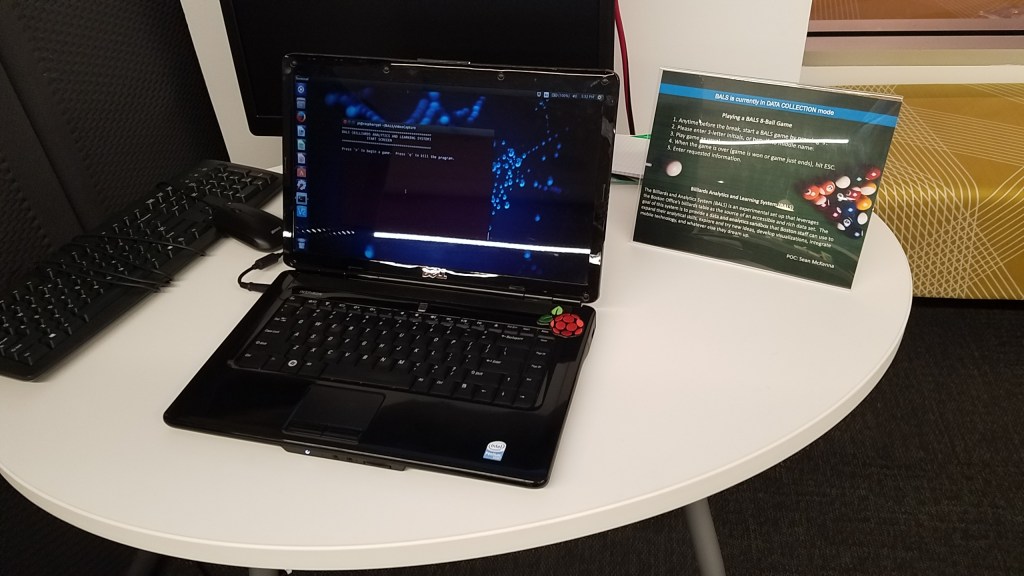

In 2017, I had just gotten a Raspberry Pi and was looking for something fun to do with it. At the time, I was working in downtown Boston on the 20th floor of a tricked-out office space, replete with cornhole, foosball, and pool tables. The pool table caught my eye, and the wheels started spinning.

I ended up building BALS, the Billiards Analytics and Learning System. The idea was to provide a data and analytics sandbox that office staff could use to expand their analytical skills, explore and try new ideas, develop visualizations, integrate mobile technology, and whatever else they dreamed up.

Some of the things I considered as I tried to figure out how to make BALS work included:

- How to mount a camera at ceiling height in a minimally invasive and inconspicuous way

- How to provide power and connectivity (wired or wireless)

- How and where the video data would be stored

- Whether a webcam would work

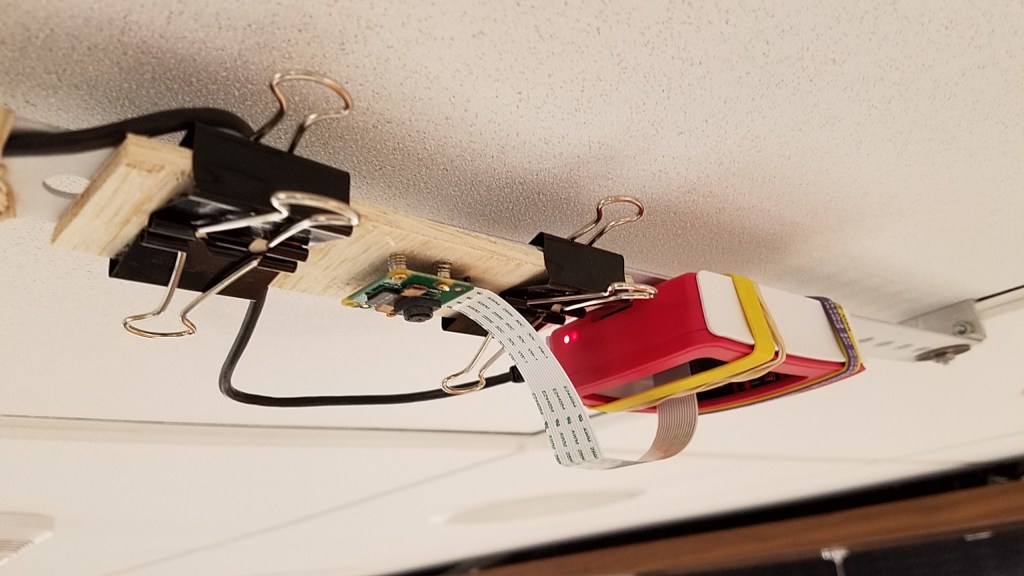

One Saturday, I went into the office to do some prototyping. Armed with some 2x4s, C-clamps, and bungee cords, I improvised a crude but functional rig. For video, I ended up using the stock Pi camera, which captured just enough of the table at a resolution of 1640 x 922 (16:9).

The next hurdle was connectivity, since the Pi was going to live on the ceiling. I needed to be able to SSH into the headless Pi remotely from another machine (the “base-station machine”). After a fair bit of research, along with some trial and error, I ended up with a wireless ad-hoc network between the Pi and an old laptop I had lying around. An ad-hoc network is a communication mode that lets computers talk to each other directly, without network infrastructure (e.g., routers or access points). This also kept the setup off the company network 😉.

Figuring out how best to capture continuous video from the Pi was a bit challenging; I found the documentation to be commensurate with the Pi's $35 price point. In the end, the camera was set up to record 1640 x 922 video at 25 frames/sec. To avoid memory issues, the recording scheme wrote the video out every 60 seconds. The last image of each game was also stored for diagnostics. At 11 PM each night, a cron job moved the collected video from the Pi to the base-station laptop. For each game, the following metadata was gathered: the players' initials, the player who broke, the winner, and who played solids and who played stripes. The system went operational on January 26, 2017, and recorded its first game at 8:15 AM.
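
The capture code itself isn't shown, so here is a rough sketch of the 60-second segmented-recording idea. It assumes the legacy `picamera` library (the widely used Python camera API for the Pi at the time), whose `record_sequence` generator switches the recording to the next output each time the loop resumes. The output directory, the `bals_*.h264` naming scheme, and the helper names are hypothetical, and the author's actual scheme may well have differed.

```python
import datetime
import pathlib

SEGMENT_SECONDS = 60       # start a new file every 60 seconds
RESOLUTION = (1640, 922)   # capture resolution used by BALS
FRAMERATE = 25             # frames per second used by BALS


def segment_names(out_dir, start, count):
    """Yield .h264 paths stamped with each segment's nominal start time (hypothetical scheme)."""
    for i in range(count):
        t = start + datetime.timedelta(seconds=i * SEGMENT_SECONDS)
        yield str(pathlib.Path(out_dir) / t.strftime("bals_%Y%m%d_%H%M%S.h264"))


def record_segments(camera, names):
    """Record into each name in turn, switching files every SEGMENT_SECONDS.

    `camera` must provide picamera's `record_sequence` generator (which moves
    the recording to the next output each time it resumes) and `wait_recording`.
    """
    recorded = []
    for name in camera.record_sequence(names):
        camera.wait_recording(SEGMENT_SECONDS)
        recorded.append(name)
    return recorded


def run_on_pi(out_dir="/home/pi/bals/video", segments=60):
    """Record `segments` one-minute clips; only works on a Pi with picamera installed."""
    import picamera  # imported here so the helpers above can be tested off-device

    with picamera.PiCamera(resolution=RESOLUTION, framerate=FRAMERATE) as camera:
        names = segment_names(out_dir, datetime.datetime.now(), segments)
        return record_segments(camera, names)
```

Writing small, fixed-length files means no single recording grows without bound, and the nightly 11 PM cron job only has to move completed files to the base station.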

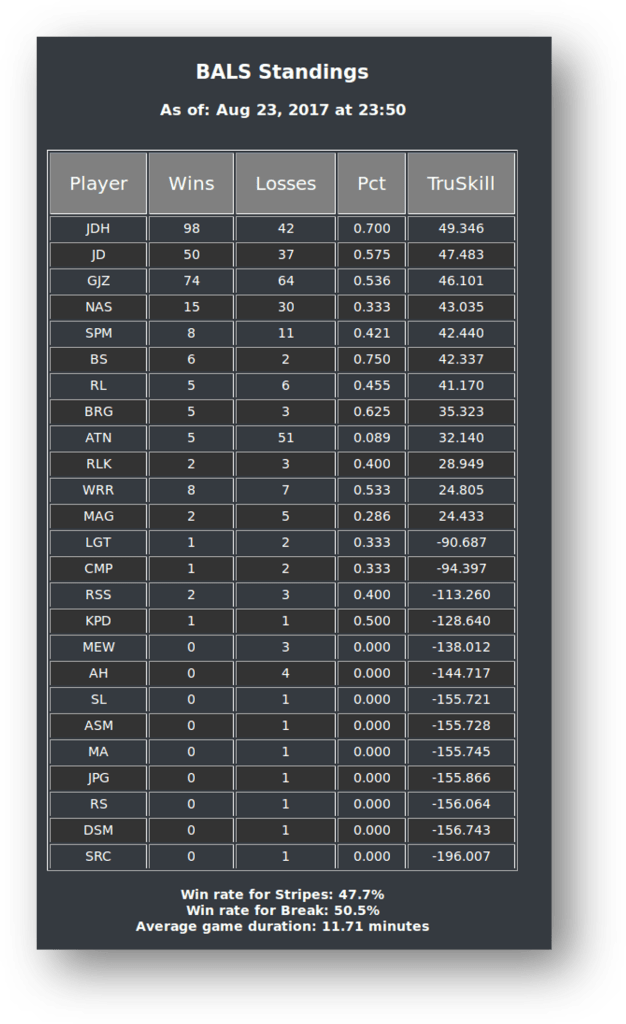

During the 2+ years that BALS ran, the base station displayed the current BALS standings (updated daily), showing wins, losses, win percentage, and TrueSkill ranking. TrueSkill is a Bayesian skill-rating system developed by Microsoft Research; it models each player's skill as a Gaussian with a mean (μ) and an uncertainty (σ) and updates both after every game. BALS computed it with the trueskill Python package. The display also reported:

- Win rate for stripes = 48.3%

- Win rate for break = 50.0%

- Average game duration = 11.6 minutes
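
The post doesn't show how the standings were computed. As a hedged sketch, assuming each game is stored with the per-game metadata BALS collected (players, breaker, winner, who played stripes) in a hypothetical `GameRecord` type, the code below computes the stripes and break win rates plus a TrueSkill ranking. BALS used the trueskill package, whose `rate_1vs1` performs the two-player update; the version here is a simplified re-implementation without the package's draw margin, so its numbers will differ somewhat from the package's defaults. Ranking by the conservative estimate μ − 3σ is a common choice, assumed here.

```python
import math
from dataclasses import dataclass

# TrueSkill defaults (the same values the trueskill package uses):
# mu=25, sigma=mu/3, beta=sigma/2, tau=sigma/100. Draws are ignored here.
MU0 = 25.0
SIGMA0 = MU0 / 3
BETA = SIGMA0 / 2
TAU = SIGMA0 / 100


def _pdf(x):
    return math.exp(-x * x / 2) / math.sqrt(2 * math.pi)


def _cdf(x):
    return 0.5 * (1 + math.erf(x / math.sqrt(2)))


@dataclass
class Rating:
    mu: float = MU0
    sigma: float = SIGMA0

    def exposed(self):
        """Conservative skill estimate used for ranking."""
        return self.mu - 3 * self.sigma


def rate_1vs1(winner, loser):
    """Two-player TrueSkill update without draws; returns (new_winner, new_loser)."""
    sw2 = winner.sigma ** 2 + TAU ** 2  # add dynamics noise before the game
    sl2 = loser.sigma ** 2 + TAU ** 2
    c2 = 2 * BETA ** 2 + sw2 + sl2
    c = math.sqrt(c2)
    t = (winner.mu - loser.mu) / c
    v = _pdf(t) / _cdf(t)  # mean shift factor (needs a stabler form for huge upsets)
    w = v * (v + t)        # variance shrink factor
    new_w = Rating(winner.mu + sw2 / c * v, math.sqrt(sw2 * (1 - sw2 / c2 * w)))
    new_l = Rating(loser.mu - sl2 / c * v, math.sqrt(sl2 * (1 - sl2 / c2 * w)))
    return new_w, new_l


@dataclass
class GameRecord:
    players: tuple  # (initials, initials)
    breaker: str    # initials of the player who broke
    winner: str
    stripes: str    # initials of the player on stripes


def summarize(games):
    """Win rates and standings (sorted by exposed TrueSkill) for a non-empty game list."""
    ratings, wins, losses = {}, {}, {}
    for g in games:  # games must be in chronological order for TrueSkill
        loser = g.players[1] if g.winner == g.players[0] else g.players[0]
        ratings[g.winner], ratings[loser] = rate_1vs1(
            ratings.get(g.winner, Rating()), ratings.get(loser, Rating()))
        wins[g.winner] = wins.get(g.winner, 0) + 1
        losses[loser] = losses.get(loser, 0) + 1
    standings = []
    for p, r in ratings.items():
        w, l = wins.get(p, 0), losses.get(p, 0)
        standings.append((p, w, l, w / (w + l), r.exposed()))
    standings.sort(key=lambda row: row[4], reverse=True)
    n = len(games)
    return {
        "stripes_win_rate": sum(g.winner == g.stripes for g in games) / n,
        "break_win_rate": sum(g.winner == g.breaker for g in games) / n,
        "standings": standings,  # (initials, wins, losses, win pct, exposed rating)
    }
```

Because TrueSkill updates are order-dependent, replaying the full game history in chronological order each day reproduces the same standings.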

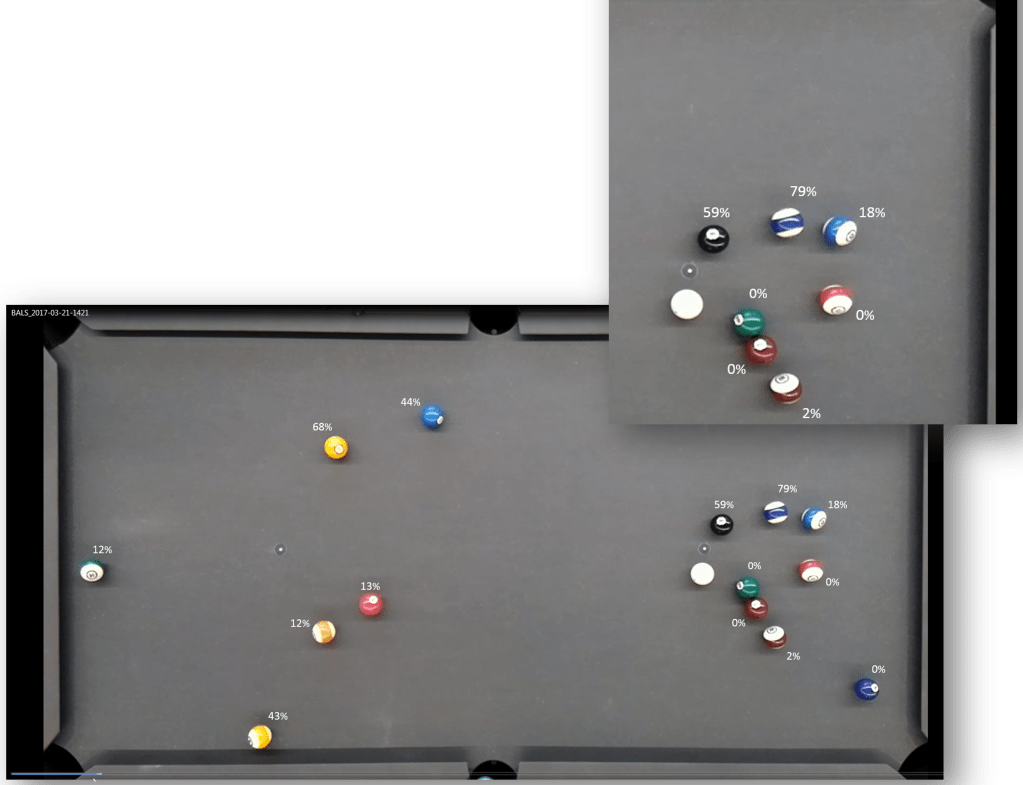

With over 700 games recorded, one thing I wanted to do was build a shot-probability model:

- Extract ball position data (x, y) and develop a set of features, together with the shot outcome (pocketed/not pocketed), to train a model

- Once the model is built, tested, and validated, the operationalized model would be used to annotate the resting table layout with a set of probabilities, one for each ball

- These annotations would reflect the probability that any ball, if selected as the target, will be pocketed

- The annotated image would be shown on a nearby monitor
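
The post stops short of the model itself, so the following is only an illustration of the planned pipeline. It assumes ball centers have already been mapped into a hypothetical 100 x 50 table frame with six pockets, reduces each candidate shot to three hypothetical features (cue-to-target distance, target-to-pocket distance, and cut angle for the easiest pocket), fits a logistic regression by batch gradient descent, and then assigns every ball on the resting layout a pocketing probability. It ignores blocking balls, rails, and which group the shooter is on, so it is a starting point rather than the BALS model.

```python
import math

# Hypothetical table frame: 100 x 50 units with four corner and two side pockets.
TABLE_LENGTH, TABLE_WIDTH = 100.0, 50.0
POCKETS = [(0, 0), (50, 0), (100, 0), (0, 50), (50, 50), (100, 50)]


def shot_features(cue, target):
    """Scaled (cue distance, pocket distance, cut angle) for the pocket with the smallest cut."""
    ax, ay = target[0] - cue[0], target[1] - cue[1]
    d_cue = math.hypot(ax, ay)
    best = None
    for px, py in POCKETS:
        bx, by = px - target[0], py - target[1]
        d_pocket = math.hypot(bx, by)
        cos_cut = (ax * bx + ay * by) / max(d_cue * d_pocket, 1e-9)
        cut = math.acos(max(-1.0, min(1.0, cos_cut)))  # 0 = straight-in shot
        if best is None or cut < best[2]:
            best = (d_cue / TABLE_LENGTH, d_pocket / TABLE_LENGTH, cut / math.pi)
    return best


def _sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def predict(weights, x):
    """Probability of a pocketed ball given features x and weights [bias, w1, ...]."""
    return _sigmoid(weights[0] + sum(w * xi for w, xi in zip(weights[1:], x)))


def fit_logistic(X, y, lr=0.5, epochs=2000):
    """Batch gradient descent on log-loss; returns [bias, w1, w2, ...]."""
    weights = [0.0] * (len(X[0]) + 1)
    for _ in range(epochs):
        grad = [0.0] * len(weights)
        for xi, yi in zip(X, y):
            err = predict(weights, xi) - yi  # derivative of log-loss w.r.t. the logit
            grad[0] += err
            for j, v in enumerate(xi):
                grad[j + 1] += err * v
        weights = [w - lr * g / len(X) for w, g in zip(weights, grad)]
    return weights


def annotate(cue, balls, weights):
    """Map each ball label to its estimated probability of being pocketed."""
    return {label: predict(weights, shot_features(cue, pos)) for label, pos in balls.items()}
```

Training pairs would come from the tracked games: the features of the ball actually shot at, labeled 1 if it dropped and 0 otherwise.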

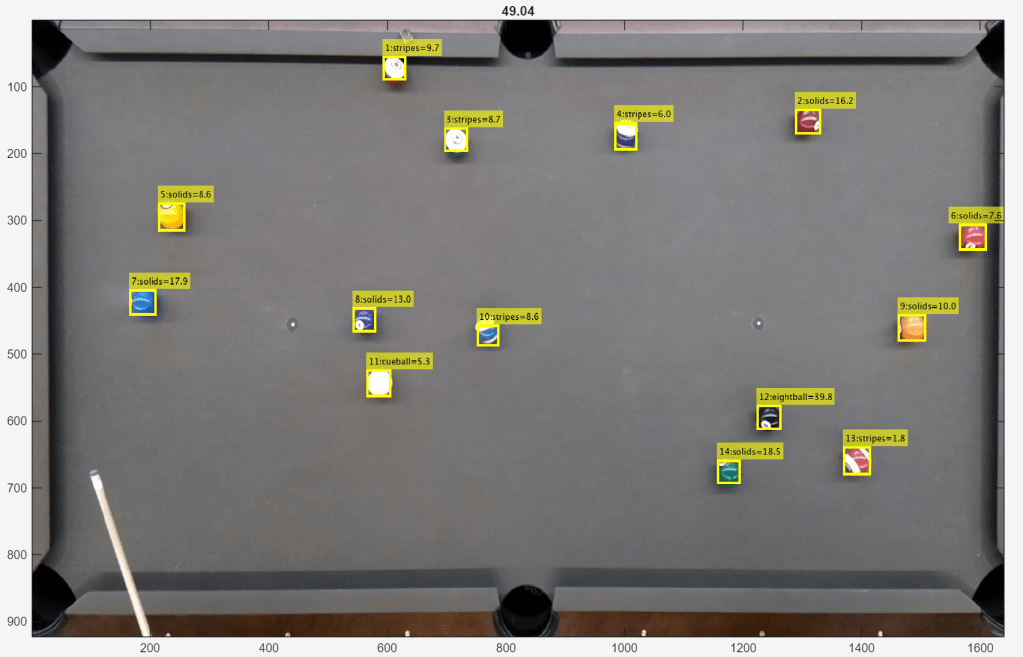

Fast-forward to 2026, and I finally got around to working with the BALS data! Long story short, I used transfer learning to train a YOLOX deep learning model to identify and locate the billiard balls, which gave me their (x, y) positions. Balls were classified as stripes, solids, eightball, or cueball; the cue stick and rack were also detected as their own classes. Training the YOLOX model required labeled images, so I built my own labeling tool (in MATLAB, where I did all this analysis).
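
The post doesn't spell out how detections became positions. One natural reading, sketched here under assumptions, is that each ball's (x, y) is the center of its bounding box. The `Detection` type, the corner-format boxes (x1, y1, x2, y2), the 0.5 score threshold, and the rule of keeping only the highest-scoring cue ball and 8-ball (there is exactly one of each on the table) are all hypothetical choices.

```python
from dataclasses import dataclass

BALL_CLASSES = {"stripes", "solids", "eightball", "cueball"}
UNIQUE_CLASSES = {"eightball", "cueball"}  # exactly one of each on the table


@dataclass
class Detection:
    label: str
    score: float
    x1: float
    y1: float
    x2: float
    y2: float


def ball_positions(detections, min_score=0.5):
    """Return {label: [(x, y), ...]} using bounding-box centers of ball detections."""
    kept = [d for d in detections if d.label in BALL_CLASSES and d.score >= min_score]
    kept.sort(key=lambda d: d.score, reverse=True)  # highest confidence first
    positions = {}
    for d in kept:
        if d.label in UNIQUE_CLASSES and d.label in positions:
            continue  # drop a lower-scoring duplicate cue ball or 8-ball
        center = ((d.x1 + d.x2) / 2, (d.y1 + d.y2) / 2)
        positions.setdefault(d.label, []).append(center)
    return positions
```

Cue-stick and rack detections are skipped here because they aren't balls, although the rack class is what signals a fresh game.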

I wrote code to detect the presence of the racked balls and the moment of the break. From there, I alternated between using the YOLOX model to identify and locate the balls and a Kalman filter to track their motion. I also trained a VGG deep learning model as a second pass over the detected balls to better classify their type.
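
The Kalman filter's motion model isn't specified in the post. A minimal sketch, assuming a constant-velocity model with independent x and y axes (which lets each axis use a 2-state filter instead of 4x4 matrices): `step()` predicts every frame and corrects with a detector position when one is available, coasting on the prediction otherwise. The class names and the noise values `q` and `r` are hypothetical and would need tuning; a constant-velocity model also ignores friction and collisions, which is one place such a tracker can struggle.

```python
class AxisKalman:
    """1-D constant-velocity Kalman filter with state (position, velocity)."""

    def __init__(self, pos, q=1.0, r=4.0):
        self.pos, self.vel = float(pos), 0.0
        self.P = [[100.0, 0.0], [0.0, 100.0]]  # large initial uncertainty
        self.q = q  # process (acceleration) noise variance, hypothetical
        self.r = r  # measurement noise variance in px^2, hypothetical

    def predict(self, dt):
        self.pos += self.vel * dt
        (p00, p01), (p10, p11) = self.P
        # P <- F P F^T + Q, with F = [[1, dt], [0, 1]] and white-noise-acceleration Q
        self.P = [
            [p00 + dt * (p01 + p10) + dt * dt * p11 + self.q * dt**4 / 4,
             p01 + dt * p11 + self.q * dt**3 / 2],
            [p10 + dt * p11 + self.q * dt**3 / 2,
             p11 + self.q * dt**2],
        ]

    def update(self, z):
        (p00, p01), (p10, p11) = self.P
        s = p00 + self.r            # innovation variance (H = [1, 0])
        k0, k1 = p00 / s, p10 / s   # Kalman gain
        innov = z - self.pos
        self.pos += k0 * innov
        self.vel += k1 * innov
        # P <- (I - K H) P
        self.P = [[(1 - k0) * p00, (1 - k0) * p01],
                  [p10 - k1 * p00, p11 - k1 * p01]]


class BallTrack:
    """Tracks one ball in image coordinates with one filter per axis."""

    def __init__(self, x, y):
        self.kx, self.ky = AxisKalman(x), AxisKalman(y)

    def step(self, dt, detection=None):
        """Predict one frame ahead; correct with an (x, y) detection if available."""
        self.kx.predict(dt)
        self.ky.predict(dt)
        if detection is not None:
            self.kx.update(detection[0])
            self.ky.update(detection[1])
        return self.kx.pos, self.ky.pos
```

At 25 frames/sec, `dt` would be 0.04 s (or simply 1 if velocities are measured in pixels per frame).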

Here’s a video of the entire sequence. With this kind of output in hand, I started on my objective: building the model to assign shot probabilities. And that’s where the stuff hit the fan. While the YOLOX model was excellent with static balls, it sometimes struggled with balls in motion. The Kalman filter also had intermittent trouble. All this to say, I couldn’t process the game video with consistent results. [Sigh]

So as of now, the project is on hold. If anyone would like the BALS data or thinks they might be able to help with the shot probability project, please reach out!
